The Problem

During a recent Java Portlets project we faced a simple requirement that turned out to be surprisingly troublesome to satisfy.

The request was simple: we had to share some parameters between portlets defined on different pages of a GateIn-based portal.

This task turned out to be harder than expected. The greatest frustration was the inability to simply inject URL parameters, the easiest mechanism that many web technologies offer to pass non-critical values from one page to another.

We first tried this simple approach:

    @Override
    public void processAction(ActionRequest request, ActionResponse response)
            throws PortletException, PortletSecurityException, IOException {
        LOGGER.info("Invoked Action Phase");
        response.setRenderParameter("prp", "#######################");
        response.sendRedirect("/sample-portal/classic/getterPage");
    }

But when the code was executed we saw this error in the logs:

14:37:32,455 ERROR [portal:UIPortletLifecycle] (http--127.0.0.1-8080-1) Error processing the action: sendRedirect cannot be called after setPortletMode/setWindowState/setRenderParameter/setRenderParameters has been called previously: java.lang.IllegalStateException: sendRedirect cannot be called after setPortletMode/setWindowState/setRenderParameter/setRenderParameters has been called previously

at org.gatein.pc.portlet.impl.jsr168.api.StateAwareResponseImpl.checkRedirect(StateAwareResponseImpl.java:120) [pc-portlet-2.4.0.Final.jar:2.4.0.Final]

...

We are used to accepting the limits of a specification, but we are also used to exceptional requests from our customers. So my task was to try to find a solution to this problem.

A solution: a Servlet Filter + WrappedResponse

I could probably have found some other way to achieve what we wanted, but I had a certain amount of fun playing with the abstraction layers that the Servlet API offers us.

In our case the portlet container rejects the redirect because render parameters have already been set on the same response, as the log message says. More generally, a redirect cannot be triggered on a response object once that response has started to stream its answer to the client.

Another typical exception that you may have encountered when playing with these aspects is:

java.lang.IllegalStateException: Response already committed

More generally, I have seen this behaviour in other technologies as well, for example in PHP when you try to write a cookie after you have already started to send some output to the client.
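
The rule can be illustrated with a rough, self-contained model. This is plain Java and not the real servlet or portlet API: SketchResponse and its methods are invented for illustration, and a real container commits the response when its buffer is flushed rather than on the first write.

```java
// Simplified model of a "committed" response. NOT the servlet API:
// every name in this sketch is hypothetical.
final class CommittedResponseSketch {

    /** Minimal stand-in for a response that streams directly to the client. */
    static final class SketchResponse {
        private final StringBuilder sentToClient = new StringBuilder();
        private boolean committed = false;
        private String redirectLocation = null;

        /** Writing output commits the response (simplification, see lead-in). */
        void write(String body) {
            committed = true;
            sentToClient.append(body);
        }

        /** A redirect is only accepted while nothing has been sent yet. */
        void sendRedirect(String location) {
            if (committed) {
                throw new IllegalStateException("Response already committed");
            }
            redirectLocation = location;
            committed = true;
        }

        boolean isCommitted() {
            return committed;
        }

        String getRedirectLocation() {
            return redirectLocation;
        }
    }

    public static void main(String[] args) {
        SketchResponse response = new SketchResponse();
        response.write("<html>partial page");
        try {
            // Too late: part of the page has already been streamed.
            response.sendRedirect("/sample-portal/classic/getterPage");
        } catch (IllegalStateException expected) {
            System.out.println("Redirect rejected: " + expected.getMessage());
        }
    }
}
```

Calling sendRedirect before any write succeeds in this model, which is the situation the rest of this post tries to recreate.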

Given this limitation, we have to find some way to deviate from this behaviour so that we can perform our redirect and still have our parameters accepted.

One standard and interesting way to "extend" the default behaviour of Servlet-based applications is via Filters.

With a filter we can inject custom behaviour that modifies the normal workflow of any application. We just have to pay attention not to break anything!

Here comes our filter:

    public class PortletRedirectFilter implements javax.servlet.Filter {

        private static final Logger LOGGER = Logger
                .getLogger(PortletRedirectFilter.class);

        private FilterConfig filterConfig = null;

        public void doFilter(ServletRequest request, ServletResponse response,
                FilterChain chain) throws IOException, ServletException {
            LOGGER.info("started filtering all urls defined in the filter url mapping ");

            if (request instanceof HttpServletRequest) {
                HttpServletRequest hsreq = (HttpServletRequest) request;

                // search for a GET parameter named as defined in the
                // REDIRECT_TO constant
                String destinationUrl = hsreq.getParameter(Constants.REDIRECT_TO);

                if (destinationUrl != null) {
                    LOGGER.info("found a redirect request " + destinationUrl);

                    // creates the HttpResponseWrapper that will buffer the
                    // answer in memory
                    DelayedHttpServletResponse delayedResponse = new DelayedHttpServletResponse(
                            (HttpServletResponse) response);

                    // forward the call to the subsequent actions that could
                    // modify externals or global scope variables
                    chain.doFilter(request, delayedResponse);

                    // fire the redirection on the original response object
                    HttpServletResponse hsres = (HttpServletResponse) response;
                    hsres.sendRedirect(destinationUrl);
                } else {
                    LOGGER.info("no redirection defined");
                    chain.doFilter(request, response);
                }
            } else {
                LOGGER.info("filter invoked outside the portal scope");
                chain.doFilter(request, response);
            }
        }

        ...

As you can see, the logic inside the filter is not particularly complex. We start by checking the type of the request object, since we need to cast it to HttpServletRequest to be able to extract GET parameters from it.

After this cast we look for a specific GET parameter, which our portlet uses for the sole purpose of specifying the address we want to redirect to.

If the redirect parameter is not set, nothing special happens: the filter implements the typical behaviour of forwarding the call to any other filters in the chain.

The really interesting behaviour happens when we detect the redirect parameter.

If we limited ourselves to forwarding the original Response object, we would receive the error we are trying to avoid. Our solution is to wrap the Response object that we forward to the other filters in a WrappedResponse that buffers the output, so that it is not streamed to the client but stays in memory.

After the other filters complete their job, we can safely issue a redirect that will not be rejected, since we fire it on the untouched original Response object and not on one that has already been written to by other components.
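
The flow described above can be sketched in plain Java, without the servlet API. Everything here is a hypothetical stand-in (Response, ClientResponse, BufferingResponse, Chain and the filter method are invented names), so read it as a model of the idea rather than as the filter itself.

```java
import java.io.ByteArrayOutputStream;
import java.nio.charset.StandardCharsets;

// Plain-Java model of "buffer the chain output, then redirect on the
// original response". All types are hypothetical, not the servlet API.
final class BufferedRedirectSketch {

    interface Response {
        void write(String body);
        void sendRedirect(String location);
    }

    /** Stand-in for the real response: writing to it commits it. */
    static final class ClientResponse implements Response {
        final StringBuilder sentToClient = new StringBuilder();
        String redirectLocation;
        boolean committed;

        public void write(String body) {
            committed = true;
            sentToClient.append(body);
        }

        public void sendRedirect(String location) {
            if (committed) {
                throw new IllegalStateException("Response already committed");
            }
            redirectLocation = location;
            committed = true;
        }
    }

    /** Decorator that keeps all written output in memory. */
    static final class BufferingResponse implements Response {
        final ByteArrayOutputStream buffer = new ByteArrayOutputStream();
        private final Response original;

        BufferingResponse(Response original) {
            this.original = original;
        }

        public void write(String body) {
            byte[] bytes = body.getBytes(StandardCharsets.UTF_8);
            buffer.write(bytes, 0, bytes.length); // never reaches the client
        }

        public void sendRedirect(String location) {
            original.sendRedirect(location);
        }
    }

    /** Stand-in for the rest of the filter chain. */
    interface Chain {
        void proceed(Response response);
    }

    /** Mirrors the branch structure of the filter's doFilter method. */
    static void filter(String redirectTo, ClientResponse response, Chain chain) {
        if (redirectTo == null) {
            chain.proceed(response); // normal behaviour, nothing buffered
            return;
        }
        chain.proceed(new BufferingResponse(response));
        response.sendRedirect(redirectTo); // original is still uncommitted
    }

    public static void main(String[] args) {
        ClientResponse client = new ClientResponse();
        filter("/sample-portal/classic/getterPage", client,
                r -> r.write("<html>portal page</html>"));
        System.out.println("redirect to: " + client.redirectLocation);
        System.out.println("chars sent to client: " + client.sentToClient.length());
    }
}
```

The price of this design is that whatever the chain renders in the redirect case is thrown away, which is acceptable here because the client is sent to another page anyway.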

We now only need to show the implementation of DelayedHttpServletResponse and of its helper class ServletOutputStreamImpl:

    public class DelayedHttpServletResponse extends HttpServletResponseWrapper {

        protected HttpServletResponse origResponse = null;
        protected OutputStream temporaryOutputStream = null;
        protected ServletOutputStream bufferedServletStream = null;
        protected PrintWriter writer = null;

        public DelayedHttpServletResponse(HttpServletResponse response) {
            super(response);
            origResponse = response;
        }

        // creates the in-memory stream that replaces the real output stream
        protected ServletOutputStream createOutputStream() throws IOException {
            try {
                temporaryOutputStream = new ByteArrayOutputStream();
                return new ServletOutputStreamImpl(temporaryOutputStream);
            } catch (Exception ex) {
                throw new IOException("Unable to construct servlet output stream: "
                        + ex.getMessage(), ex);
            }
        }

        @Override
        public ServletOutputStream getOutputStream() throws IOException {
            if (bufferedServletStream == null) {
                bufferedServletStream = createOutputStream();
            }
            return bufferedServletStream;
        }

        @Override
        public PrintWriter getWriter() throws IOException {
            if (writer != null) {
                return writer;
            }
            bufferedServletStream = getOutputStream();
            writer = new PrintWriter(new OutputStreamWriter(bufferedServletStream,
                    "UTF-8"));
            return writer;
        }
    }

DelayedHttpServletResponse implements the Decorator pattern around HttpServletResponse. It keeps a reference to the original Response object that it decorates and instantiates a separate OutputStream, which every component that writes to the ServletResponse will use instead.

This OutputStream writes to an in-memory buffer that never reaches the client, but it lets the server keep processing the call and perform all the server-side interactions related to the client session.
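
To see the buffering on its own, here is a small sketch that uses only JDK classes: a PrintWriter layered over a ByteArrayOutputStream, the same combination that getWriter() builds. The class and method names (InMemoryWriterSketch, renderIntoBuffer) are made up for this example.

```java
import java.io.ByteArrayOutputStream;
import java.io.OutputStreamWriter;
import java.io.PrintWriter;
import java.nio.charset.StandardCharsets;

// JDK-only sketch of writing markup into an in-memory buffer.
final class InMemoryWriterSketch {

    /** Writes the markup through a PrintWriter and returns what was buffered. */
    static String renderIntoBuffer(String markup) {
        ByteArrayOutputStream buffer = new ByteArrayOutputStream();
        PrintWriter writer = new PrintWriter(
                new OutputStreamWriter(buffer, StandardCharsets.UTF_8));
        writer.write(markup);
        // The writer keeps its own internal buffer, so flush before
        // reading the bytes back from the ByteArrayOutputStream.
        writer.flush();
        return new String(buffer.toByteArray(), StandardCharsets.UTF_8);
    }

    public static void main(String[] args) {
        String captured = renderIntoBuffer("<p>rendered but never sent</p>");
        System.out.println("captured in memory: " + captured);
    }
}
```

In the filter nobody reads the buffer back, so the content is simply discarded once the request ends.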

The implementation of ServletOutputStreamImpl is not particularly interesting: it is a basic (and possibly incomplete) implementation of the ServletOutputStream abstract class:

    public class ServletOutputStreamImpl extends ServletOutputStream {

        OutputStream _out;
        boolean closed = false;

        public ServletOutputStreamImpl(OutputStream realStream) {
            this._out = realStream;
        }

        @Override
        public void close() throws IOException {
            if (closed) {
                throw new IOException("This output stream has already been closed");
            }
            _out.flush();
            _out.close();
            closed = true;
        }

        @Override
        public void flush() throws IOException {
            if (closed) {
                throw new IOException("Cannot flush a closed output stream");
            }
            _out.flush();
        }

        @Override
        public void write(int b) throws IOException {
            if (closed) {
                throw new IOException("Cannot write to a closed output stream");
            }
            _out.write(b);
        }

        @Override
        public void write(byte[] b) throws IOException {
            write(b, 0, b.length);
        }

        @Override
        public void write(byte[] b, int off, int len) throws IOException {
            if (closed) {
                throw new IOException("Cannot write to a closed output stream");
            }
            _out.write(b, off, len);
        }
    }

This is all the code we need to enable the required behaviour. What remains is registering the filter.

We configure it in the GateIn web descriptor, portlet-redirect/war/src/main/webapp/WEB-INF/web.xml:

    <!-- Added to allow redirection of calls after Public Render Parameters have already been set. -->
    <filter>
        <filter-name>RedirectFilter</filter-name>
        <filter-class>paolo.test.portal.servletfilter.PortletRedirectFilter</filter-class>
    </filter>
    <filter-mapping>
        <filter-name>RedirectFilter</filter-name>
        <url-pattern>/*</url-pattern>
    </filter-mapping>

Remember to declare it as the first filter-mapping so that it is executed first and all the subsequent filters receive the buffered response object (our DelayedHttpServletResponse).

And now you can do something like this in your portlet to use the filter:

    @Override
    protected void doView(RenderRequest request, RenderResponse response)
            throws PortletException, IOException, UnavailableException {
        LOGGER.info("Invoked Display Phase");
        response.setContentType("text/html");
        PrintWriter writer = response.getWriter();

        /*
         * generates a link to this same portlet instance, that will trigger the
         * processAction method that will be responsible for setting the public
         * render parameter
         */
        PortletURL portalURL = response.createActionURL();
        String requiredDestination = "/sample-portal/classic/getterPage";
        String url = addRedirectInfo(portalURL, requiredDestination);
        writer.write(String
                .format("<br/><A href='%s' style='text-decoration:underline;'>REDIRECT to %s and set PublicRenderParameters</A><br/><br/>",
                        url, requiredDestination));
        LOGGER.info("Generated url with redirect parameters");
        writer.close();
    }

    /**
     * Helper that adds the REDIRECT_TO GET parameter to the URL of a link.
     *
     * @param u the portlet URL to extend
     * @param redirectTo the address the filter should redirect to
     * @return the URL string with the redirect parameter appended
     */
    private String addRedirectInfo(PortletURL u, String redirectTo) {
        String result = u.toString();
        result += String.format("&%s=%s", Constants.REDIRECT_TO, redirectTo);
        return result;
    }

    /*
     * sets the public render parameter
     */
    @Override
    public void processAction(ActionRequest request, ActionResponse response)
            throws PortletException, PortletSecurityException, IOException {
        LOGGER.info("Invoked Action Phase");
        response.setRenderParameter("prp", "#######################");
    }

You will see that you can set the Render Parameter during the Action phase, and that during the Render phase you can specify the parameter that triggers the filter to issue a redirect.
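
One detail worth considering: the helper appends the destination to the URL as-is, which works for a simple path but could break if the destination itself carried a query string. A possible variant, sketched with JDK classes only, URL-encodes the value before appending it. The names RedirectParamSketch and appendRedirectParam, and the parameter name "redirectTo", are assumptions for the example; the real value of Constants.REDIRECT_TO is not shown in this post.

```java
import java.io.UnsupportedEncodingException;
import java.net.URLEncoder;

// Sketch of appending an encoded redirect target as a query parameter.
// Names are hypothetical and not part of the sample project.
final class RedirectParamSketch {

    static String appendRedirectParam(String url, String paramName, String redirectTo) {
        // Use '?' when the URL has no query string yet, '&' otherwise.
        String separator = url.contains("?") ? "&" : "?";
        return url + separator + encode(paramName) + "=" + encode(redirectTo);
    }

    private static String encode(String value) {
        try {
            return URLEncoder.encode(value, "UTF-8");
        } catch (UnsupportedEncodingException e) {
            // UTF-8 is always available on a compliant JVM.
            throw new IllegalStateException(e);
        }
    }

    public static void main(String[] args) {
        System.out.println(appendRedirectParam(
                "/portal/action?a=1", "redirectTo",
                "/sample-portal/classic/getterPage"));
    }
}
```

On the receiving side, a call such as getParameter on the servlet request returns the decoded value, so the filter logic would not need to change under this assumption.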

Files

I have created a repo with a working sample portal that defines a couple of portal pages, some portlets and the filter itself, so that you can verify the behaviour and play with the application.

https://github.com/paoloantinori/gate-in-portlet-portlet-redirect-filter

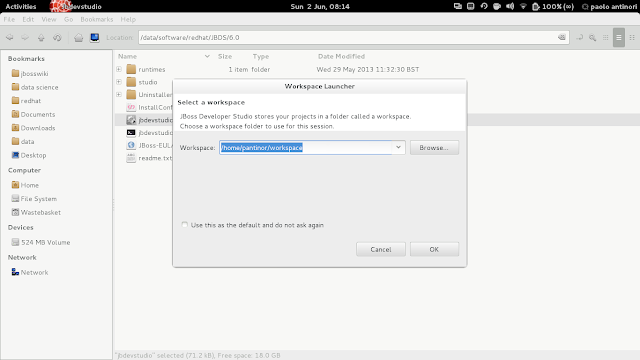

In the README.md you will find the original instructions from the GateIn project to build and deploy the project on JBoss AS 7. In particular, pay attention to the section of standalone.xml that you are required to uncomment to enable the configuration that the sample portal relies on.

My code additions do not require any extra configuration.

The portal I created is based on the GateIn sample portal quickstart that you can find here:

https://github.com/paoloantinori/gate-in-portlet-portlet-redirect-filter

If you clone the GateIn repo, remember to switch to the 3.5.0.Final tag, so that you work with a stable version that matches the full GateIn distribution + JBoss AS 7 that you can download from here:

https://github.com/paoloantinori/gate-in-portlet-portlet-redirect-filter